PROVIDENCE, R.I. [Brown University] — New software created by Brown University computer scientists could help website owners and developers easily determine what parts of a page are grabbing a user’s eye.

The software, called WebGazer.js, turns integrated computer webcams into eye-trackers that can infer where on a webpage a user is looking. The software can be added to any website with just a few lines of code and runs on the user’s browser. The user’s permission is required to access the webcam, and no video is shared. Only the location of the user’s gaze is reported back to the website in real time.
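For developers wondering what "a few lines of code" might look like, here is a minimal sketch. It assumes the tracker exposes a chainable `setGazeListener(...)` followed by `begin()`, as the WebGazer project describes. The `GazeTracker` interface and the `startGazeLogging` helper are illustrative names introduced here, not part of the library.

```typescript
// Shape of one gaze prediction: screen coordinates in pixels.
interface GazePoint {
  x: number;
  y: number;
}

// The small subset of a tracker this sketch relies on. It is modeled on
// WebGazer's setGazeListener(...).begin() chaining; treat it as an assumption.
interface GazeTracker {
  setGazeListener(
    listener: (data: GazePoint | null, elapsedMs: number) => void
  ): GazeTracker;
  begin(): unknown;
}

// Hypothetical helper: start tracking and forward each usable prediction.
function startGazeLogging(
  tracker: GazeTracker,
  onGaze: (point: GazePoint, elapsedMs: number) => void
): void {
  tracker
    .setGazeListener((data, elapsedMs) => {
      if (data === null) return; // no prediction available for this frame
      onGaze({ x: data.x, y: data.y }, elapsedMs);
    })
    .begin(); // in a browser, this is where the webcam permission prompt appears
}
```

On a page that has already loaded the library's script, the call would presumably be `startGazeLogging(webgazer, (p) => console.log(p.x, p.y))`. Only the gaze coordinates reach the callback; no video frames are passed along.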

“We see this as a democratization of eye-tracking,” said Alexandra Papoutsaki, a Brown University graduate student who led the development of the software. “Anyone can add WebGazer to their site and get a much richer set of analytics compared to just tracking clicks or cursor movements.”

Papoutsaki and her colleagues will present a paper describing the software in July at the International Joint Conference on Artificial Intelligence. The code is freely available at http://webgazer.cs.brown.edu/.

The use of eye tracking for web analytics isn’t new, but such studies nearly always require standalone eye-tracking devices that often cost tens of thousands of dollars. The studies are generally done in a lab setting, with users forced to hold their heads a certain distance from a monitor or wear a headset.

“We’re using the webcams that are already integrated in users’ computers, which eliminates the cost factor,” Papoutsaki said. “And it’s more naturalistic in the sense that we observe people in the real environment instead of in a lab setting.”

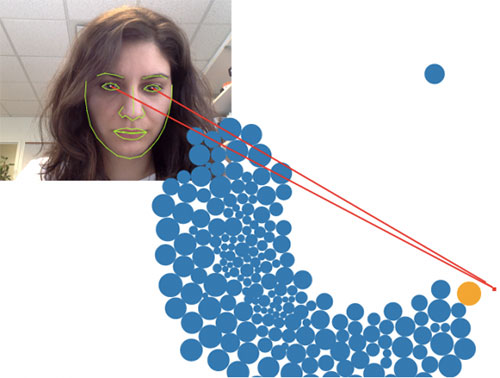

When the code is embedded on a website, it prompts users to give permission to access their webcams. Once permission is given, the software employs a face-detection library to locate the user’s face and eyes. The system converts the image to black and white, which enables it to distinguish the sclera (the whites of the eyes) from the iris.
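The image-processing step can be illustrated with a simplified sketch. It converts RGBA pixels of an eye region to grayscale, then estimates the iris position as the centroid of the darkest pixels. The luma weights are the standard Rec. 601 values. The thresholding approach, the default threshold and the function names are assumptions made for illustration, not the method WebGazer itself uses.

```typescript
// Convert RGBA pixel data (4 bytes per pixel) to one gray value per pixel,
// using the standard Rec. 601 luma weights.
function toGrayscale(rgba: Uint8ClampedArray): Uint8ClampedArray {
  const gray = new Uint8ClampedArray(rgba.length / 4);
  for (let i = 0; i < gray.length; i++) {
    const r = rgba[4 * i];
    const g = rgba[4 * i + 1];
    const b = rgba[4 * i + 2];
    gray[i] = 0.299 * r + 0.587 * g + 0.114 * b; // rounded and clamped on write
  }
  return gray;
}

// Hypothetical iris estimate: the centroid of all pixels darker than
// `threshold`. The bright sclera falls above the threshold and is ignored.
function darkCentroid(
  gray: Uint8ClampedArray,
  width: number,
  threshold = 80
): { x: number; y: number } | null {
  let sumX = 0;
  let sumY = 0;
  let count = 0;
  for (let i = 0; i < gray.length; i++) {
    if (gray[i] < threshold) {
      sumX += i % width;
      sumY += Math.floor(i / width);
      count++;
    }
  }
  return count === 0 ? null : { x: sumX / count, y: sumY / count };
}
```

In a browser, the RGBA input could come from the `data` field of a canvas `ImageData` object cropped to the eye region found by the face detector.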

Having located the iris, the system employs a statistical model that is calibrated by the user’s clicks and cursor movements. The model assumes that a user looks at the spot where they click, so each click tells the model what the eye looks like when it’s viewing a particular spot. It takes about three clicks to get a reasonable calibration, after which the model can accurately infer the location of the user’s gaze in real time.
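The click-based calibration can be sketched as a small regression problem. Each click pairs a description of the eye's appearance with a known screen position, and a model is fitted to map one to the other. The sketch below uses ridge regression as one plausible choice; the article says only that a statistical model is used. The `EyeFeatures` vector, the `ClickSample` type and the regularization value are hypothetical.

```typescript
// Hypothetical feature vector describing the eye in one frame
// (for example, normalized iris offsets). Not WebGazer's actual features.
type EyeFeatures = number[];

interface ClickSample {
  features: EyeFeatures; // what the eye looked like at the moment of the click
  x: number; // click position in screen pixels
  y: number;
}

// Solve A w = b by Gauss-Jordan elimination with partial pivoting.
function solve(A: number[][], b: number[]): number[] {
  const n = b.length;
  const M = A.map((row, i) => [...row, b[i]]);
  for (let col = 0; col < n; col++) {
    let pivot = col;
    for (let r = col + 1; r < n; r++) {
      if (Math.abs(M[r][col]) > Math.abs(M[pivot][col])) pivot = r;
    }
    [M[col], M[pivot]] = [M[pivot], M[col]];
    for (let r = 0; r < n; r++) {
      if (r === col) continue;
      const f = M[r][col] / M[col][col];
      for (let c = col; c <= n; c++) M[r][c] -= f * M[col][c];
    }
  }
  return M.map((row, i) => row[n] / row[i]);
}

// Fit ridge weights for one screen axis: (X^T X + lambda I) w = X^T t.
function fitAxis(rows: number[][], targets: number[], lambda: number): number[] {
  const d = rows[0].length;
  const A = Array.from({ length: d }, (_, i) =>
    Array.from({ length: d }, (_, j) =>
      rows.reduce((s, r) => s + r[i] * r[j], 0) + (i === j ? lambda : 0)
    )
  );
  const b = Array.from({ length: d }, (_, i) =>
    rows.reduce((s, r, k) => s + r[i] * targets[k], 0)
  );
  return solve(A, b);
}

// Build a gaze predictor from click samples. The bias term is regularized
// along with the other weights, which keeps the sketch short.
function calibrate(samples: ClickSample[], lambda = 1e-3) {
  const rows = samples.map((s) => [1, ...s.features]); // leading 1 = bias term
  const wx = fitAxis(rows, samples.map((s) => s.x), lambda);
  const wy = fitAxis(rows, samples.map((s) => s.y), lambda);
  const dot = (w: number[], f: EyeFeatures) =>
    [1, ...f].reduce((s, v, i) => s + v * w[i], 0);
  return (f: EyeFeatures) => ({ x: dot(wx, f), y: dot(wy, f) });
}
```

With a two-number feature vector plus a bias term, three clicks are enough to determine the weights, which is in line with the "about three clicks" figure above. Additional clicks would refine the fit rather than define it.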

Papoutsaki and her colleagues performed a series of experiments to evaluate the system. They showed that it can infer gaze location within 100 to 200 screen pixels. “That’s not as accurate as specialized commercial eye trackers, but it still gives you a very good estimation of where the user is looking,” Papoutsaki said.
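An accuracy figure expressed in screen pixels can be computed as the average distance between where the model predicted the gaze and where the user was actually looking. The sketch below shows one common way to do that; the function and type names are illustrative, and the article does not specify the exact metric the researchers used.

```typescript
interface ScreenPoint {
  x: number;
  y: number;
}

// Mean Euclidean distance, in pixels, between predicted and true gaze points.
function meanPixelError(predicted: ScreenPoint[], actual: ScreenPoint[]): number {
  if (predicted.length === 0 || predicted.length !== actual.length) {
    throw new Error("inputs must be non-empty and of equal length");
  }
  let total = 0;
  for (let i = 0; i < predicted.length; i++) {
    total += Math.hypot(predicted[i].x - actual[i].x, predicted[i].y - actual[i].y);
  }
  return total / predicted.length;
}
```

A result between 100 and 200 would correspond to the range reported for the system.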

She and her colleagues envision this as a tool that can help website owners prioritize popular or eye-catching content, optimize a page’s usability, and place and price advertising space.

A newspaper website, for example, “could learn what articles you read on a page, how long you read them and in what order,” said Jeff Huang, an assistant professor of computer science at Brown and co-developer of the software. Another application, the researchers said, might be evaluating how students use content in massive open online courses (MOOCs).

As the team continues to refine the software, they envision broader potential applications down the road — perhaps in eye-controlled gaming or helping people with physical impairments to navigate the web.

“Our purpose here was to give the tool both to the scientific community and to developers and owners of websites and see how they choose to adopt it,” Papoutsaki said.

The work was supported by the National Science Foundation.