PROVIDENCE, R.I. [Brown University] — Many brain training games claim to improve mental performance, but a growing body of cognitive research shows that while participants get better on a game’s specific tasks, the benefits do not transfer to real-life skills such as remembering what to pick up from the grocery store.

“This challenge of transferring improvements on specific, simple cognitive tasks to complex, everyday tasks has been called the ‘curse of specificity’,” said Takeo Watanabe, a professor of cognitive, linguistic and psychological sciences at Brown University.

This curse hinders applying findings from basic cognitive science research to help patients, Watanabe added. But while studying a fundamental type of learning called visual perceptual learning, where neurons in the primary visual part of the brain are involved in learning specific features of objects, Watanabe and colleagues were surprised to find they could get learning transfer by leveraging a higher brain process: categorization.

The findings were published on Thursday, March 28, in the journal Current Biology.

“Categorization is the process the brain uses to determine if, for example, an image is a snake or a stick, and it generally occurs in a higher brain region,” said co-author Yuka Sasaki, a professor of cognitive, linguistic and psychological sciences at Brown. “Doing categorization before the visual perceptual learning tasks was a simple idea, but the effect of transfer on that level is huge.”

Watanabe Lab / Brown University

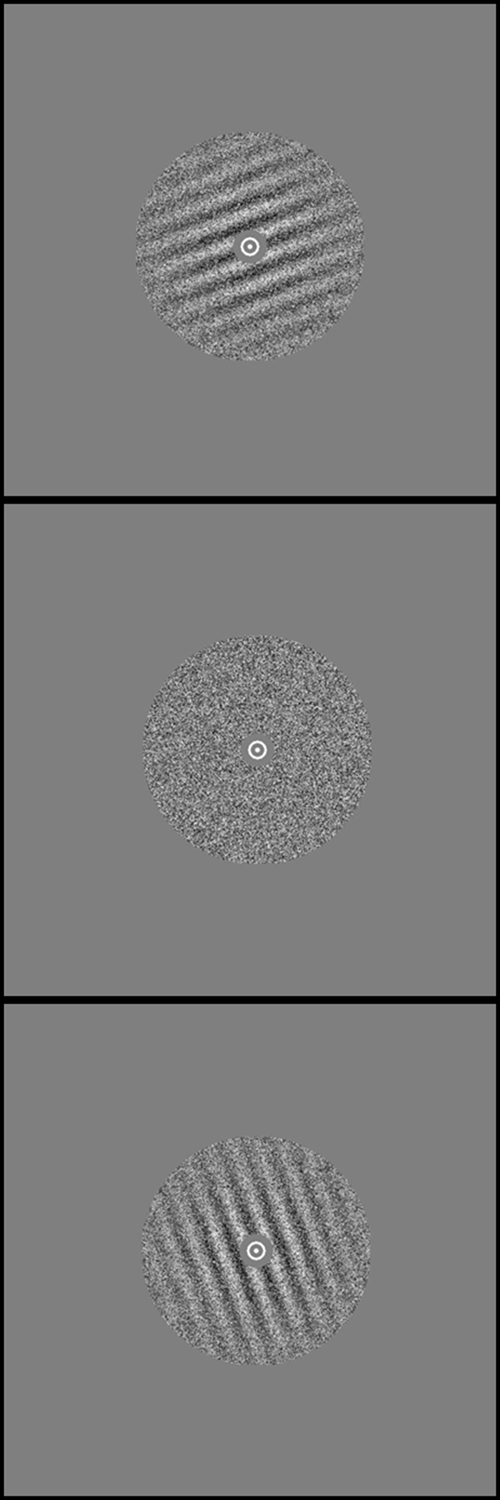

First, the research team taught participants to categorize blurry striped circles, called Gabor patches, into two arbitrary groups of angles. Then, for five days in a row, the participants practiced recognizing Gabor patches of one angle in contrast to circles of random dots.

After that, the researchers tested how much the participants improved in recognizing patches of the trained angle, patches with angles in the same category and patches in the other category. The participants improved most in recognizing patches of the same angle they trained on, but they also significantly improved in recognizing patches in the same category. If the participants didn’t learn the arbitrary categories first, they only improved at recognizing patches with the trained angle.

The researchers used the artificial Gabor patches because they are easy to tweak, said Sasaki, who with Watanabe is affiliated with Brown’s Carney Institute for Brain Science. The researchers can change the angle of the stripes or the amount of noise that blurs the stripes to see if those factors impact visual learning. The experiment included a dozen participants, primarily undergraduate and graduate students with normal or corrected-to-normal vision. The no-categorization control had seven participants.

“Usually learning is very specific, but categorization — which happens often in our daily lives — can make transfer possible,” Sasaki said. “We think that this finding could be especially useful for clinical purposes. You won’t have to train patients on every possible combination of stimuli.”

For example, patients suffering from macular degeneration, the most common cause of age-related vision loss, or another type of visual degradation could be trained to recognize an object, say a cup, against a background of the same color, Watanabe said. The patients’ improvements in recognizing a cup of a specific color or specific shape could potentially generalize to other objects belonging to the “cup” category.

In addition to the clinical implications, the researchers said the findings were significant because they showed that a higher brain process — categorization — impacted visual perceptual learning, a lower-level brain process, through top-down processing in which signals flow from higher to lower brain areas.

The research team would like to test the top-down processing model, perhaps using functional magnetic resonance imaging, to non-invasively see brain activity as the participants undergo visual perceptual learning training and testing, Sasaki said. They would also like to test visual perceptual learning of features on natural objects, say a photo of a snake, instead of Gabor patches, Watanabe added.

“Snakes have some specific features: the elongated body plan, the texture of scales, even stripes of specific colors,” Watanabe said. “The sensitivity of these features could be learned and since the creature is categorized as a snake, perhaps some of this learning will transfer to another type of snake even if the stripes or some other feature are not exactly the same, which in many cases would be good for our survival.”

In addition to Watanabe and Sasaki, other authors from Brown include Qingleng Tan and Zhiyan Wang.

The National Institutes of Health's National Eye Institute (grants R01 EY019466, R01 EY027841 and R21 EY028329) and the United States-Israel Binational Science Foundation supported the research.